Why testing agents is so hard

Some of the top challenges include:

Non-deterministic outputs

The underlying issue at hand is that agents are non-deterministic. The same input goes in, two different outputs can come out.

How do you test for an expected outcome when you don’t know what the expected outcome will be? Simply put, testing for strictly defined outputs doesn’t work.

Unstructured outputs

The second, and less discussed, challenge of testing agentic systems is that outputs are often unstructured. The foundation of agentic systems are large language models after all.

It is much easier to define a test for structured data. For example, the id field should never be NULL or always be an integer. How do you define the quality of a large field of text?

Cost and scale

LLM-as-judge is the most common methodology for evaluating the quality or reliability of AI agents. However, it’s an expensive workload and each user interaction (trace) can consist of hundreds of interactions (spans).

So we rethought our agent testing strategy. In this post we’ll share our learnings including a new key concept that has proven pivotal to ensuring reliability at scale.

Testing our agent

We have two agents in production that are leveraged by more than 30,000 users. The Troubleshooting Agent combs through hundreds of signals to determine the root cause of a data reliability incident while the Monitoring Agent makes smart data quality monitoring recommendations.

For the Troubleshooting agent we test three main dimensions: semantic distance, groundedness, and tool usage. Here is how we test for each.

Semantic distance

We leverage deterministic tests when appropriate as they are clear, explainable, and cost-effective. For example, it is relatively easy to deploy a test to ensure one of the subagent’s outputs is in JSON format, that they don’t exceed a certain length, or to make sure the guardrails are being called as intended.

However, there are times when deterministic tests won’t get the job done. For example, we explored embedding both expected and new outputs as vectors and using cosine similarity tests. We thought this would be a cheaper and faster way to evaluate semantic distance (is the meaning similar) between observed and expected outputs.

However, we found there were too many cases in which the wording was similar, but the meaning was different.

Instead, we now provide our LLM judge the expected output from the current configuration and ask it to score on a 0-1 scale the similarity of the new output.

Groundedness

For groundedness, we check to ensure that the key context is present when it should be, but also that the agent will decline to answer when the key context is missing or the question is out of scope.

This is important as LLMs are eager to please and will hallucinate when they aren’t grounded with good context.

Tool usage

For tool usage we have an LLM-as-judge evaluate whether the agent performed as expected for the pre-defined scenario meaning:

- No tool was expected and no tool was called

- A tool was expected and a permitted tool was used

- No required tools were omitted

- No non-permitted tools were used

The real magic is not deploying these tests, but how these tests are applied. Here is our current setup informed by some painful trial and error.

Agent testing best practices

It’s important to keep in mind not only are your agents non-deterministic, but so are your LLM evaluations! These best practices are mainly designed to combat those inherent shortcomings.

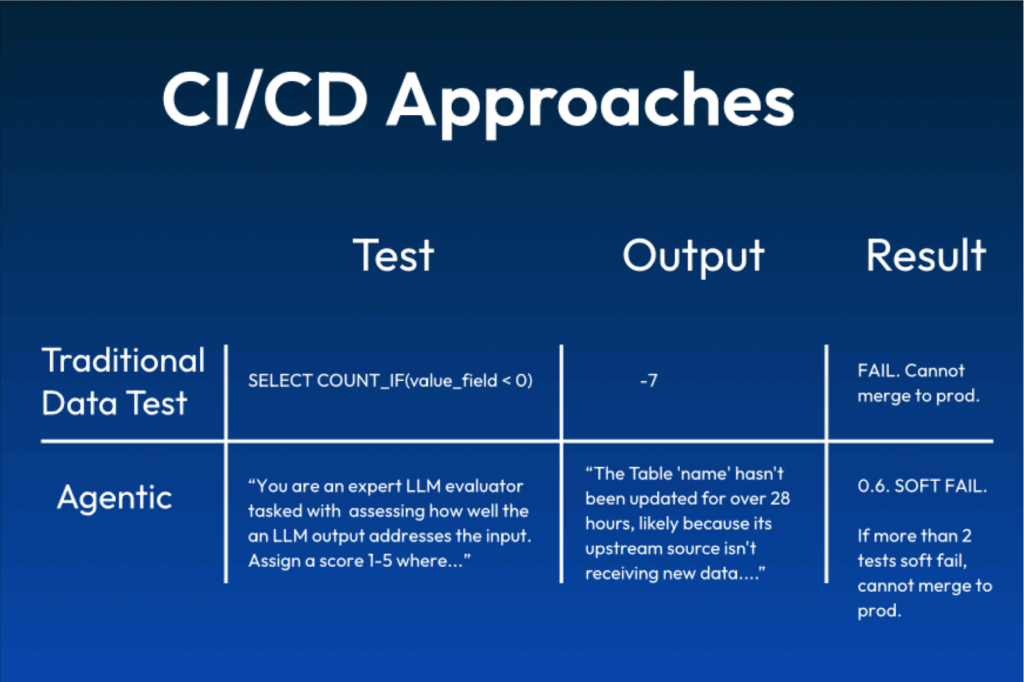

Soft failures

Hard thresholds can be noisy with non-deterministic tests for obvious reasons. So we invented the concept of a “soft failure.”

The evaluation comes back with a score between 0-1. Anything less than a .5 is a hard failure, while anything above a .8 is a pass. Soft failures occur for scores between .5 to .8.

Changes can be merged for a soft failure. However, if a certain threshold of soft failures is exceeded it constitutes a hard failure and the process is halted.

For our agent, it is currently configured so that if 33% of tests result in a soft failure or if there are any more than 2 soft failures total, then it is considered a hard failure. This prevents the change from being merged.

Re-evaluate soft failures

Soft failures can be a canary in a coal mine, or in some cases they can be nonsense. About 10% of soft failures are the result of hallucinations. In the case of a soft failure, the evaluations will automatically re-run. If the resulting tests pass we assume the original result was incorrect.

Explanations

When a test fails, you need to understand why it failed. We now ask every LLM judge to not just provide a score, but to explain it. It’s imperfect, but it helps build trust in the evaluation and often speeds debugging.

Removing flaky tests

You have to test your tests. Especially with LLM-as-judge evaluations, the way the prompt is built can have a large impact on the results. We run tests multiple times and if the delta across the results is too large we will revise the prompt or remove the flaky test.

Monitoring in production

Agent testing is new and challenging, but it’s a walk in the park compared to monitoring agent behavior and outputs in production. Inputs are messier, there is no expected output to baseline, and everything is at a much larger scale.

Not to mention the stakes are much higher! System reliability problems quickly become business problems.

This is our current focus. We’re leveraging agent observability tools to tackle these challenges and will report new learnings in a future post.

The Troubleshooting Agent has been one of the most impactful features we’ve ever shipped. Developing reliable agents has been a career-defining journey and we’re excited to share it with you.

Michael Segner is a product strategist at Monte Carlo and the author of the O’Reilly report, “Enhancing data + AI reliability through observability.” This was co-authored with Elor Arieli and Alik Peltinovich.