Inference

-

Tips for accelerating AI/ML on CPU — Part 2

11 min read -

Flyin’ Like a Lion on Intel Xeon

20 min read -

The counterintuitive approach to AI optimization that’s changing how we deploy models

20 min read -

It’s like grading papers, but your student is an LLM

12 min read -

Optimizing highly parallel AI algorithm execution

11 min read -

Take a dive into the foundations and exemplifying use cases of the Poisson distribution

17 min read -

How LLMs Work: Pre-Training to Post-Training, Neural Networks, Hallucinations, and Inference

Large Language ModelsWith the recent explosion of interest in large language models (LLMs), they often seem almost…

9 min read -

Implementing Speculative and Contrastive Decoding

8 min read -

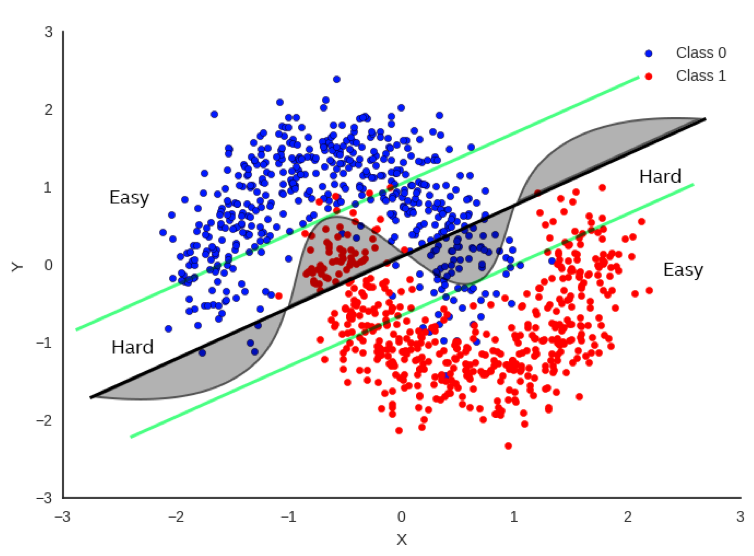

Getting your AI task to distinguish between Hard and Easy problems

12 min read -